What is Maritime AI Object Detection

Object detection is one of the most fundamental and challenging problems in computer vision. It focuses on identifying and localizing instances of predefined object classes (such as boats, humans, or animals) in digital images.

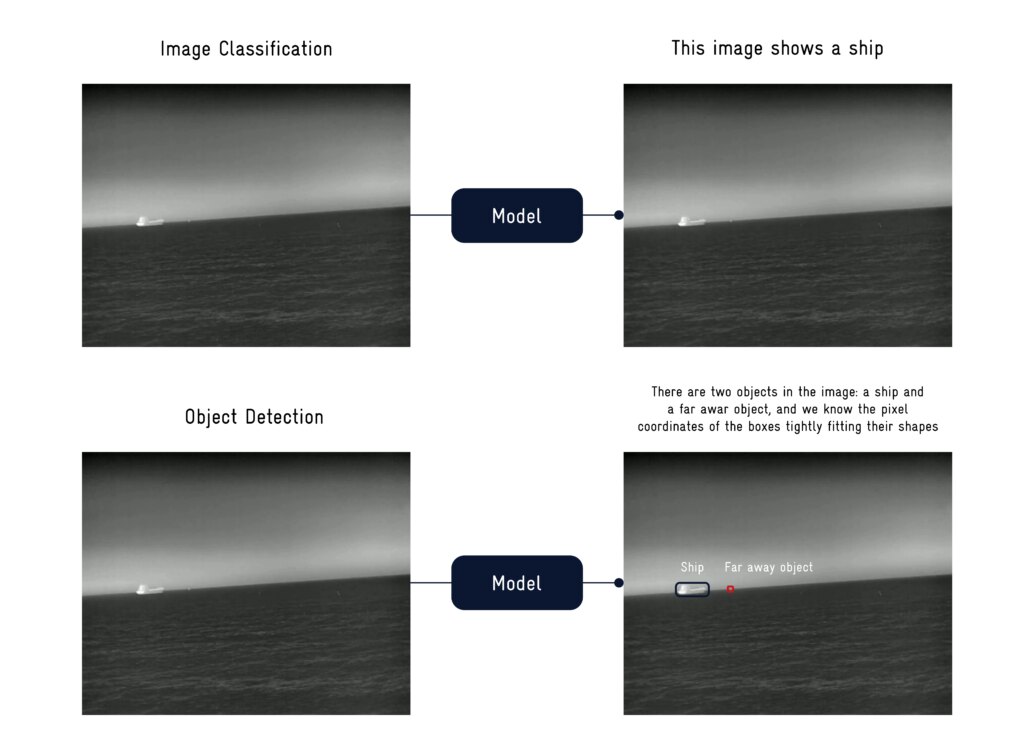

Compared to image classification, object detection provides more information about an image’s content. The following example demonstrates this.

Image classification would merely answer the question, “What object is in the image?” – it would say “ship” as an answer, if the model works well. If the model can predict several classes, it might even say “ship” and “far away object”, but that is the most information we can get from it.

In comparison, object detection would not only answer “What object is in the image?” but also “How many objects are in the image?” and “Where are the objects located?”

Of course, this is a more useful answer in many real-world scenarios, such as collision avoidance.
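To make the difference concrete, here is a minimal sketch of what the two kinds of output might look like as data. The `Detection` class, the labels, the scores, and the box coordinates are all hypothetical values chosen for illustration; they are not the output of any particular model.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str    # what the object is
    score: float  # model confidence between 0 and 1
    box: tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


# Hypothetical classifier output: a single label for the whole image.
classification_output = "ship"

# Hypothetical detector output: one entry per object found in the image.
detection_output = [
    Detection("ship", 0.94, (412.0, 230.0, 530.0, 301.0)),
    Detection("buoy", 0.81, (120.0, 260.0, 134.0, 275.0)),
]


def summarize(detections):
    """Answer 'what', 'how many', and 'where' from a list of detections."""
    counts = {}
    for d in detections:
        counts[d.label] = counts.get(d.label, 0) + 1
    # The box center is one simple way to express 'where'.
    centers = [((d.box[0] + d.box[2]) / 2, (d.box[1] + d.box[3]) / 2)
               for d in detections]
    return counts, centers


counts, centers = summarize(detection_output)
print(classification_output)  # the classifier only tells us "what"
print(counts, centers)        # the detector also tells us "how many" and "where"
```

The classifier output cannot tell us that a second, smaller object is present, while the detection list carries a position for each object.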

These answers, also called predictions, are generated by models. Nowadays, these models are deep neural networks (DNNs).

Several types of DNNs exist, but two dominate tasks like object detection. The most foundational deep learning method for computer vision uses so-called Convolutional Neural Networks (CNNs).

Researchers invented the architecture of CNNs back in 1980. However, the breakthrough paper popularizing deep CNNs appeared only in 2012, introducing AlexNet.

Since then, CNNs have been a foundational ingredient in computer vision. In 2017, researchers at Google introduced the Transformer architecture, which was later adapted to vision tasks and became very popular for its strong detection performance.

Nevertheless, they have not fully replaced CNNs, as Transformers tend to be slower, require more data for training, and require more computational power.

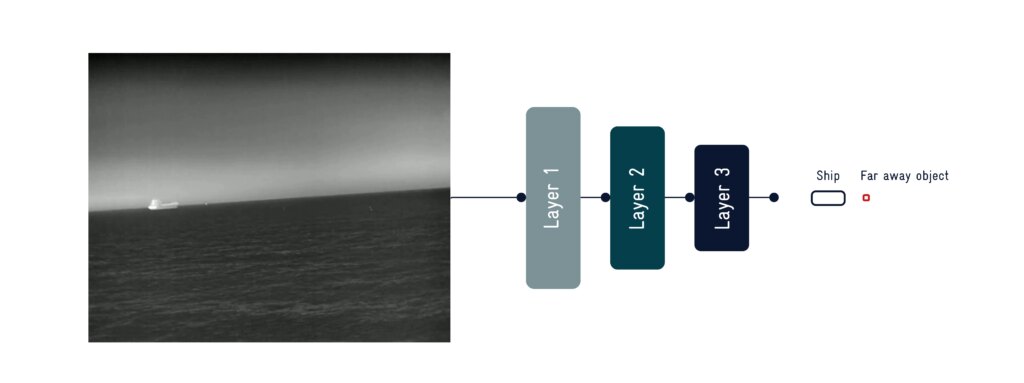

These models consist of several layers stacked on top of one another.

When you feed an image into the model, each layer processes it, and each layer feeds its output into the next.
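As a rough illustration of this stacking, the sketch below passes a tiny list of numbers (standing in for image pixels) through three toy layers in sequence. The helper names `make_layer` and `forward`, the weights, and the use of `max(0, ...)` as a non-linearity are assumptions made for this example; real detection models use far richer layer types.

```python
def make_layer(weight, bias):
    """Build a toy layer that computes max(0, weight * x + bias) per value."""
    def layer(values):
        return [max(0.0, weight * v + bias) for v in values]
    return layer


# Three hypothetical layers stacked on top of one another.
layers = [make_layer(2.0, 1.0), make_layer(0.5, -1.0), make_layer(3.0, 0.0)]


def forward(values, layers):
    """Feed the input through each layer; each output becomes the next input."""
    for layer in layers:
        values = layer(values)
    return values


pixels = [0.0, 1.0, 2.0]  # stand-in for image data
output = forward(pixels, layers)
print(output)
```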

The term “deep” in deep learning refers to the presence of a large number of such layers. The accompanying figure shows a very simplified layout of how these layers stack.

Note that this is just a toy example; in practice, models contain many more layers, different kinds of layers, and other types of connections and processing operations.

In a very simplified sense, one can think of each layer as a calculation that uses addition and multiplication.

Each layer contains multiple parameters, which are simply the numbers that these mathematical operations use.

In terms of the total number of parameters per model, DNNs in object detection can vary a lot – from 2.4M parameters (YOLO26 nano, a CNN-based model) up to 218M parameters (a DINO version using a SwinL backbone, a transformer-based model).
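To give a feeling for where such totals come from, the following sketch counts the parameters of a small stack of convolutional layers using the standard formula (kernel height × kernel width × input channels × output channels, plus one bias per output channel). The three layer sizes are hypothetical and do not correspond to any of the models named above.

```python
def conv2d_params(in_channels, out_channels, kernel_size, bias=True):
    """Number of learnable parameters in a square-kernel 2D convolution."""
    weights = kernel_size * kernel_size * in_channels * out_channels
    return weights + (out_channels if bias else 0)


# Hypothetical toy network: (in_channels, out_channels, kernel_size) per layer.
toy_network = [(3, 16, 3), (16, 32, 3), (32, 64, 3)]

per_layer = [conv2d_params(*spec) for spec in toy_network]
total = sum(per_layer)
print(per_layer, total)
```

Even this three-layer toy already has tens of thousands of parameters, which makes totals in the millions plausible for real detectors with many wider layers.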

To obtain a model that makes good predictions, you must train it. Intuitively, during this process, the model learns what certain types of objects look like and how to correctly detect them.

In deep learning, data drives the training process. That means that, instead of explicitly telling the model how certain types of objects look (which is essentially impossible), we give the model large amounts of data and the desired predictions (called ground truth).

Based on these two factors, the model learns to generate the best predictions. While this might sound a bit like magic, it’s essentially just math: the model learns optimal parameter values through a mathematical optimization process.
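The optimization idea can be sketched on a deliberately tiny problem: a "model" with only two parameters, `w` and `b`, that learns to reproduce ground-truth values by gradient descent on the mean squared error. This is an illustrative stand-in, not the training procedure of an actual detection network; the data, learning rate, and step count are assumptions chosen so that the example converges.

```python
def train(xs, ys, lr=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0  # start from arbitrary parameter values
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]  # inputs (the "data")
ys = [1.0, 3.0, 5.0, 7.0]  # desired predictions (the "ground truth"), y = 2x + 1
w, b = train(xs, ys)
print(round(w, 3), round(b, 3))
```

The model is never told the rule `y = 2x + 1`; it recovers the two parameter values purely from the data and the ground truth, which is the same principle applied at a much larger scale in deep learning.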

In effect, it learns a highly complex, non-linear mathematical function that best summarizes the given data. Not surprisingly, a high-quality dataset is essential for achieving a reliable model with correct detection results.

We typically divide the dataset into three subsets: a training set, a validation set, and a test set.

This separation enables objective performance measurement and supports iterative improvements in key aspects such as detection speed, robustness to multi-scale objects (varying sizes and distances), and overall precision.
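A three-way split of this kind could be sketched as follows, assuming the customary training, validation, and test subsets. The 70/15/15 percentages, the fixed seed, and the `img_0000`-style identifiers are assumptions for illustration, not a description of our actual pipeline.

```python
import random


def split_dataset(items, train_pct=70, val_pct=15, seed=0):
    """Shuffle items reproducibly and cut them into three disjoint subsets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # everything that remains
    return train, val, test


image_ids = [f"img_{i:04d}" for i in range(100)]  # hypothetical image names
train, val, test = split_dataset(image_ids)
print(len(train), len(val), len(test))
```

Keeping the subsets disjoint is what makes the measurement objective: the model is evaluated on images it has never seen during training.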

To continuously improve model performance, the dataset must be expanded regularly. Data is collected in real operational environments and across diverse conditions to ensure robustness against a wide range of scenarios.

The full data collection process is detailed here: How do we Collect Data for AI training?

Especially in the maritime use case, object size presents a particular difficulty. Frequently, objects sit relatively far away, which causes them to appear with little detail in the image.
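A back-of-the-envelope calculation shows how quickly detail disappears with distance. The sketch below uses the simple pinhole camera approximation, in which the apparent size in pixels equals the focal length (in pixels) times the real size divided by the distance. The focal length, object sizes, and distances are hypothetical numbers, not the specifications of any real camera.

```python
def apparent_size_px(object_size_m, distance_m, focal_length_px):
    """Approximate on-image size of an object under a pinhole camera model."""
    return focal_length_px * object_size_m / distance_m


FOCAL_LENGTH_PX = 1000.0  # hypothetical camera focal length in pixels

# A hypothetical 10 m tall sailing boat, 2 km away.
boat_px = apparent_size_px(10.0, 2000.0, FOCAL_LENGTH_PX)

# A hypothetical 1 m buoy, 200 m away.
buoy_px = apparent_size_px(1.0, 200.0, FOCAL_LENGTH_PX)

print(boat_px, buoy_px)
```

Under these assumptions, both objects span only a handful of pixels, and they span the same number, which hints at why distant objects of very different real sizes can be hard to tell apart.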

Consider the image below: objects clearly exist in each crop, but what categories do the objects correspond to?

After some time, one might guess that the right side shows a sailing boat and the left side shows a spherical buoy.

However, this is not a trivial distinction (same spherical shape, same color in LWIR images, etc.). In such cases, a large, high-quality dataset becomes especially important so that the model can learn the proper distinctions. This is why we place such high emphasis on having a good dataset.

Object detection focuses on localizing (where?) and classifying (what?) all objects in an image. It does so by performing a complex mathematical operation, using the image as input.

While the mathematical operations are intricate and usually involve millions of parameters, the goal is simple: to robustly detect all objects in an image.

In our case, this serves as the basis for successful collision avoidance at sea.

AI object detection identifies and locates maritime objects using deep neural networks, enabling safer vessel navigation and collision avoidance.
