Maritime Counter-USV Systems: How AI Optical Detection Closes the Threat Gap

The proliferation of unmanned surface vehicles (USVs) has fundamentally changed the threat calculus at sea.

Once the exclusive domain of well-resourced navies, USVs are now within reach of state actors, non-state groups, and commercial operators alike. They can serve as surveillance platforms, as weapon carriers, or as autonomous swarms designed to overwhelm traditional defences.

For naval forces, coast guards, and port security teams, the question is no longer whether USVs pose a threat. It is whether existing sensor systems are equipped to meet it.

The answer, in most cases, is not yet.

Effective counter-USV operations depend on a layered approach: detect, classify, track, and, if necessary, neutralise. Each step depends on the one before it. Miss the detection, and everything downstream fails.
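That dependency chain can be sketched in a few lines of Python. This is a minimal illustration, not SEA.AI code: the `Contact` fields and stage names are hypothetical, and a real system carries far more state. The point is structural: a contact that was never detected reaches no later stage, whatever else might be known about it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Contact:
    """Hypothetical per-contact state in a layered counter-USV chain."""
    detected: bool
    label: Optional[str] = None     # classifier output; None if not yet classified
    track_id: Optional[int] = None  # tracker ID; None if not yet tracked


def stages_completed(c: Contact) -> list[str]:
    """Return the stages this contact has passed, in order.

    A stage is only credited if every stage before it succeeded, so a
    missed detection short-circuits everything downstream.
    """
    stages: list[str] = []
    if not c.detected:
        return stages
    stages.append("detect")
    if c.label is None:
        return stages
    stages.append("classify")
    if c.track_id is None:
        return stages
    stages.append("track")
    return stages


def eligible_for_response(c: Contact) -> bool:
    """A response decision is only considered once detect, classify and track are all done."""
    return stages_completed(c) == ["detect", "classify", "track"]


# A label without a detection is ignored: nothing downstream runs.
print(stages_completed(Contact(detected=False, label="rib", track_id=3)))  # []
print(eligible_for_response(Contact(detected=True, label="rib", track_id=3)))  # True
```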

Radar can detect surface contacts, but small, low-profile USVs, particularly those built with radar-absorbing materials or operated at low speeds, can slip through. AIS, meanwhile, is mandatory on large commercial ships and is widely carried voluntarily by smaller craft, including many recreational boats. Availability is not the issue.

The problem is that AIS can be switched off or spoofed in seconds, and a threat USV will simply not broadcast its location. Sonar, while invaluable for subsurface threats, is not designed to identify what is approaching on the surface.

The result is a detection gap. Bad actors exploit it. And conventional procurement has been slow to close it.
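One way to make that gap concrete is to cross-check optical detections against AIS reports: anything the cameras see that has no AIS counterpart is a non-cooperative contact. The sketch below assumes both sources have already been converted to bearing and range from own ship in the same reference frame (real AIS reports carry latitude and longitude, so that conversion is a separate step). The gate widths are illustrative placeholders, not tuned values.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    """A contact position relative to own ship (same reference frame for all sources)."""
    bearing_deg: float
    range_nm: float


def angle_diff(a: float, b: float) -> float:
    """Smallest absolute difference between two bearings, in degrees (0 to 180)."""
    d = (a - b) % 360.0
    return min(d, 360.0 - d)


def non_cooperative(optical: list[Fix], ais: list[Fix],
                    max_bearing_deg: float = 2.0,   # illustrative gate, not a tuned value
                    max_range_nm: float = 0.1) -> list[Fix]:
    """Return optical detections with no AIS report inside the bearing/range gate."""
    return [
        det for det in optical
        if not any(
            angle_diff(det.bearing_deg, tgt.bearing_deg) <= max_bearing_deg
            and abs(det.range_nm - tgt.range_nm) <= max_range_nm
            for tgt in ais
        )
    ]


optical = [Fix(10.0, 0.8), Fix(359.5, 1.2)]
ais = [Fix(0.5, 1.25)]  # one vessel is broadcasting; the other is silent
print(non_cooperative(optical, ais))  # [Fix(bearing_deg=10.0, range_nm=0.8)]
```

Note the wrap-around handling in `angle_diff`: bearings of 359.5° and 0.5° are one degree apart, not 359.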

Electro-optical and infrared (EO/IR) sensors, fused with AI-driven computer vision, represent the most operationally relevant response to this gap. Unlike radar or AIS, they do not require a vessel to broadcast its identity or position. They detect what is physically present, in real time, day or night.

This matters enormously in counter-USV operations. The primary challenge is separating a threat contact from background clutter (waves, marine wildlife, debris, and legitimate commercial traffic) and doing so fast enough for a human operator or autonomous system to act.

Modern deep learning models can classify a surface contact within seconds, identifying vessel type and size, and, combined with tracking, estimate its heading and speed. They can distinguish between a fishing dinghy and an approaching RIB. They can flag a fast-moving contact converging on an asset. And they can do this continuously, without fatigue, across a full 360-degree field of view.
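Flagging a fast-moving contact converging on an asset is, at its simplest, a closest-point-of-approach (CPA) calculation on the tracked motion. The sketch below uses the standard constant-velocity CPA and time-to-CPA (TCPA) formulas, with TCPA equal to the negative dot product of relative position and relative velocity divided by the squared relative speed. The relative position and velocity are assumed to come from a tracker, and the speed, distance and time thresholds are hypothetical values chosen for illustration.

```python
import math


def cpa_tcpa(px: float, py: float, vx: float, vy: float) -> tuple[float, float]:
    """Closest point of approach under constant velocity.

    (px, py): contact position relative to own ship, nautical miles.
    (vx, vy): contact velocity relative to own ship, knots.
    Returns (cpa_nm, tcpa_hours); tcpa is 0 if the contact is already opening.
    """
    v2 = vx * vx + vy * vy
    if v2 == 0.0:
        # No relative motion: the range never changes.
        return math.hypot(px, py), 0.0
    t = max(-(px * vx + py * vy) / v2, 0.0)
    return math.hypot(px + vx * t, py + vy * t), t


def is_converging_threat(px, py, vx, vy,
                         min_speed_kn=15.0,  # hypothetical "fast" threshold (relative speed)
                         cpa_limit_nm=0.2,   # hypothetical protection radius
                         horizon_h=0.25):    # only look 15 minutes ahead
    """True if a fast contact will pass inside the protection radius soon."""
    cpa, tcpa = cpa_tcpa(px, py, vx, vy)
    fast = math.hypot(vx, vy) >= min_speed_kn
    return fast and 0.0 < tcpa <= horizon_h and cpa <= cpa_limit_nm
```

For example, a contact 1.6 nm dead ahead closing at 30 knots reaches a CPA of zero after 1.6 / 30 hours, about 3.2 minutes, and is flagged; the same contact moving away is not.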

This is not a theoretical capability. It is deployed today.

At SEA.AI, we have been building AI-powered visual intelligence systems for maritime operations since 2018. Originally built for collision avoidance on recreational vessels, the technology has since proven directly applicable, and increasingly critical, in defence contexts.

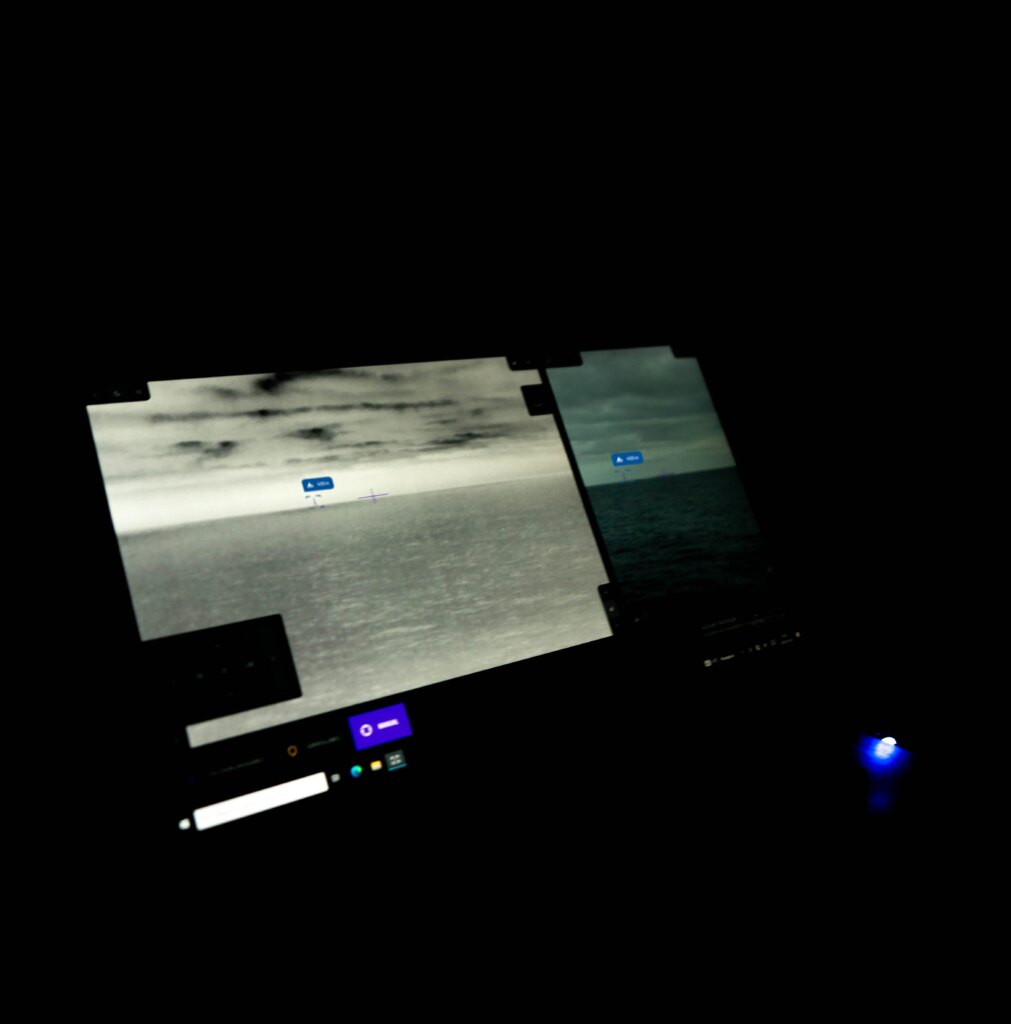

At its core, SEA.AI combines optical and thermal cameras with a deep learning engine trained on over 18 million annotated marine objects. The result is a perception layer that classifies non-cooperative contacts in real time, something radar and AIS cannot do.

SEA.AI’s Sentry detects small inflatable vessels, the class most used in asymmetric attacks, at up to 1.6 nautical miles. Larger motorboats and sailboats register at up to 4 nautical miles.

That detection window gives operators and autonomous systems the time needed to assess, respond, and coordinate.
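The size of that window is simple arithmetic: warning time is detection range divided by closing speed. The sketch below applies it to the two ranges quoted above. The 30-knot closing speed is an assumed example, not a figure from this article, and real closing speeds depend on both the threat's and own ship's motion.

```python
def warning_time_minutes(detection_range_nm: float, closing_speed_kn: float) -> float:
    """Minutes from first detection to arrival, assuming a constant closing speed."""
    if closing_speed_kn <= 0:
        raise ValueError("contact is not closing")
    # nautical miles / knots = hours; convert to minutes
    return detection_range_nm / closing_speed_kn * 60.0


# Ranges quoted for Sentry; 30 kn is an assumed closing speed.
for label, range_nm in [("small inflatable", 1.6), ("motorboat/sailboat", 4.0)]:
    print(f"{label}: {warning_time_minutes(range_nm, 30.0):.1f} min")
```

At 30 knots this gives about 3.2 minutes of warning for a small inflatable and 8 minutes for a larger boat; faster threats shrink both figures proportionally.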

SEA.AI systems output machine-readable data (object classification, relative bearing, estimated range) via an API that plugs directly into existing command and control systems, autonomous navigation stacks, and remote operations centres.

There is no separate display to monitor. The intelligence flows where it is needed.
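On the consuming side, integration typically means parsing each message and converting bearing and range into whatever frame the C2 or autonomy stack uses. The sketch below is hypothetical throughout: the JSON layout and the field names `detections`, `class`, `bearing_deg` and `range_m` are invented for illustration and are not SEA.AI's actual API schema. It assumes relative bearing is measured clockwise from the bow.

```python
import json
import math
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    bearing_deg: float  # relative bearing, clockwise from the bow (assumed convention)
    range_m: float      # estimated range in metres (assumed unit)

    def to_ship_frame(self) -> tuple[float, float]:
        """Return (starboard, ahead) offsets in metres from own ship."""
        theta = math.radians(self.bearing_deg)
        return self.range_m * math.sin(theta), self.range_m * math.cos(theta)


def parse_message(raw: str) -> list[Detection]:
    """Parse one message in the hypothetical JSON layout described above."""
    msg = json.loads(raw)
    return [
        Detection(d["class"], float(d["bearing_deg"]), float(d["range_m"]))
        for d in msg["detections"]
    ]


raw = '{"detections": [{"class": "inflatable", "bearing_deg": 90, "range_m": 1000}]}'
for det in parse_message(raw):
    print(det.label, det.to_ship_frame())
```

A contact on the starboard beam (bearing 90°) comes out roughly 1000 m to starboard and 0 m ahead; because only relative quantities are used, no GPS fix is needed for this step.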

SEA.AI Watchkeeper is already deployed on the USV12, a 12-metre naval USV developed by VN Maritime and Havelsan. It provides fully integrated optical situational awareness for naval platforms and larger autonomous vessels.

It delivers reliable detection and tracking in GPS-denied, AIS-denied, and low-visibility conditions. These are precisely the environments where USV threats emerge.

SEA.AI’s systems were built to perform in real maritime environments, not laboratory conditions. They have been validated across diverse sea states, light conditions, and operational domains, from Arctic waters to tropical coastlines.

This matters because counter-USV operations rarely happen in ideal conditions. A threat USV is unlikely to approach on a clear day with calm seas. The value of an AI system is determined by its performance when conditions are worst, not when they are easiest.
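One way to build that principle into an evaluation is to score detection recall separately for each condition and report the worst bin rather than the average. The sketch below does this; the condition labels and the toy results are made up for illustration and are not SEA.AI validation data.

```python
from collections import defaultdict


def recall_by_condition(results):
    """results: iterable of (condition, detected) pairs, one per ground-truth target."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for condition, detected in results:
        totals[condition] += 1
        hits[condition] += int(detected)
    return {c: hits[c] / totals[c] for c in totals}


def worst_case(results):
    """Return (condition, recall) for the condition with the lowest recall."""
    per_condition = recall_by_condition(results)
    worst = min(per_condition, key=per_condition.get)
    return worst, per_condition[worst]


# Toy data: 10 targets per condition (illustrative only).
toy = ([("calm_day", True)] * 9 + [("calm_day", False)]
       + [("night_rough", True)] * 6 + [("night_rough", False)] * 4)
print(worst_case(toy))
```

Averaged over both bins this toy system scores 75% recall, which hides the 60% it achieves at night in rough seas; the worst-case figure is the one that matters for a threat that chooses its conditions.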

SEA.AI’s growing footprint across European and US defence integrations reflects confidence in that real-world performance. The architecture scales from compact 4-metre surveillance drones to large naval surface combatants.

Effective counter-USV capability requires layered solutions. No single sensor solves the problem. But as the threat environment evolves (faster USVs, longer ranges, swarm tactics), the detection layer becomes the decisive one. You cannot neutralise what you have not detected and classified.

Optical AI does not replace radar or AIS. It closes the gap those systems leave open. For navies, coast guards, and port authorities facing an evolving USV threat, that gap closure is no longer optional.

SEA.AI exists to close it.

Radar can detect surface contacts, but small or low-profile USVs — particularly those designed with radar-absorbing materials or operating at low speeds — can fall below radar detection thresholds.

Radar also does not classify contacts; it shows a return, not a vessel type. AI-powered optical systems fill this gap by classifying what radar detects, and detecting what radar misses.
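The "classify what radar detects, detect what radar misses" split can be sketched as a bearing-gated association between radar returns and optical detections. This is a deliberately simplified, hypothetical example: it matches on bearing alone with a greedy nearest-neighbour rule and an arbitrary 3-degree gate, whereas a fielded system would also gate on range and would typically use a global assignment method.

```python
def angle_diff(a: float, b: float) -> float:
    """Smallest absolute difference between two bearings, in degrees (0 to 180)."""
    d = (a - b) % 360.0
    return min(d, 360.0 - d)


def fuse(radar_bearings, optical, gate_deg=3.0):
    """Associate radar returns with optical detections by bearing.

    radar_bearings: list of radar contact bearings in degrees.
    optical: list of (bearing_deg, label) tuples from the camera system.
    Returns (classified, radar_only, optical_only).
    """
    unused = list(range(len(optical)))  # optical detections not yet matched
    classified, radar_only = [], []
    for rb in radar_bearings:
        best = min(unused, key=lambda i: angle_diff(rb, optical[i][0]), default=None)
        if best is not None and angle_diff(rb, optical[best][0]) <= gate_deg:
            classified.append((rb, optical[best][1]))  # radar return gains a class label
            unused.remove(best)
        else:
            radar_only.append(rb)  # a return, but no vessel type
    optical_only = [optical[i] for i in unused]  # contacts radar missed
    return classified, radar_only, optical_only


print(fuse([10.0, 200.0], [(11.0, "rib"), (95.0, "inflatable")]))
```

Here the return at 10° picks up the label "rib", the return at 200° stays unclassified, and the inflatable at 95° is a contact radar did not report at all.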

AI-powered computer vision analyses video feeds from optical and thermal cameras to detect, classify, and track surface objects in real time.

Trained on large datasets of annotated maritime imagery, these systems can distinguish between vessel types, flag anomalous contacts, and operate continuously without operator fatigue. SEA.AI’s deep learning engine, for example, draws on a database of over 18 million annotated marine objects to deliver accurate classification across diverse sea conditions.
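The tracking step can be illustrated with the simplest possible multi-object tracker: greedy nearest-neighbour association with a distance gate. This is a textbook technique swapped in for illustration, not SEA.AI's tracker. The 50-metre gate is arbitrary, positions are assumed to be in a local metric frame, and there is no motion prediction or track deletion, both of which a real tracker needs.

```python
import math
from itertools import count


class NearestNeighbourTracker:
    """Minimal greedy tracker: each detection joins the nearest unclaimed track
    within `gate_m`, otherwise it starts a new track."""

    def __init__(self, gate_m: float = 50.0):
        self.gate_m = gate_m
        self.tracks: dict[int, tuple[float, float]] = {}  # track id -> last (x, y) in metres
        self._ids = count(1)

    def update(self, detections: list[tuple[float, float]]) -> dict[int, tuple[float, float]]:
        """Assign this frame's detections to tracks; return {track_id: position}."""
        free = set(self.tracks)  # tracks not yet claimed in this frame
        assigned = {}
        for x, y in detections:
            best = min(free, key=lambda t: math.dist((x, y), self.tracks[t]), default=None)
            if best is not None and math.dist((x, y), self.tracks[best]) <= self.gate_m:
                free.discard(best)
                tid = best
            else:
                tid = next(self._ids)  # no track close enough: start a new one
            self.tracks[tid] = (x, y)
            assigned[tid] = (x, y)
        return assigned


tracker = NearestNeighbourTracker(gate_m=50.0)
print(tracker.update([(0.0, 0.0), (1000.0, 0.0)]))   # two new tracks
print(tracker.update([(1010.0, 5.0), (10.0, 0.0)]))  # same IDs, despite reordering
```

Because IDs persist across frames, the position history of each track is what later yields heading and speed.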

Optical AI serves as the perception layer in a counter-USV stack, providing the classification intelligence that other systems depend on to make response decisions.

It does not replace kinetic or electronic countermeasures; it ensures those countermeasures are triggered by confirmed, correctly identified threats rather than false positives or missed contacts.
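A common way to keep countermeasures from firing on a single bad frame is M-of-N confirmation: a track is declared a threat only after enough recent frames agree. The sketch below assumes per-frame labels and confidences from the classifier; the threat class names, the 0.6 confidence floor and the 3-of-5 rule are hypothetical parameters, not SEA.AI settings.

```python
from collections import deque


class ThreatConfirmer:
    """M-of-N confirmation for one track: confirmed while at least `m` of the
    last `n` frames show a threat class at or above `min_conf`."""

    def __init__(self, m=3, n=5, min_conf=0.6,
                 threat_classes=frozenset({"rib", "usv"})):  # hypothetical class names
        if not 0 < m <= n:
            raise ValueError("need 0 < m <= n")
        self.m = m
        self.min_conf = min_conf
        self.threat_classes = threat_classes
        self.hits = deque(maxlen=n)  # sliding window of per-frame threat votes

    def update(self, label: str, confidence: float) -> bool:
        """Record one frame's classification; return True if the track is confirmed."""
        vote = label in self.threat_classes and confidence >= self.min_conf
        self.hits.append(vote)
        return sum(self.hits) >= self.m


confirmer = ThreatConfirmer()
frames = [("rib", 0.9), ("rib", 0.4), ("rib", 0.8), ("fishing_dinghy", 0.9), ("rib", 0.9)]
print([confirmer.update(label, conf) for label, conf in frames])
```

A single low-confidence or misclassified frame delays confirmation rather than triggering or cancelling a response outright.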

Radar sees a contact. AIS shows a name, provided the vessel chooses to broadcast one. When a threat USV does neither, optical AI is the only sensor layer that still sees it. That is the gap SEA.AI was built to close.

